This article discusses the problem of diminishing keyword data and suggests innovative ways of using the available data in the future.

Key items:

- The value of long-tail keywords

- Encrypted search gaining momentum

- Decreasing amount of available keyword data

- Google’s tools process keywords to protect user privacy

- Working with available data to make actionable plans

The Value of Long-Tail Traffic

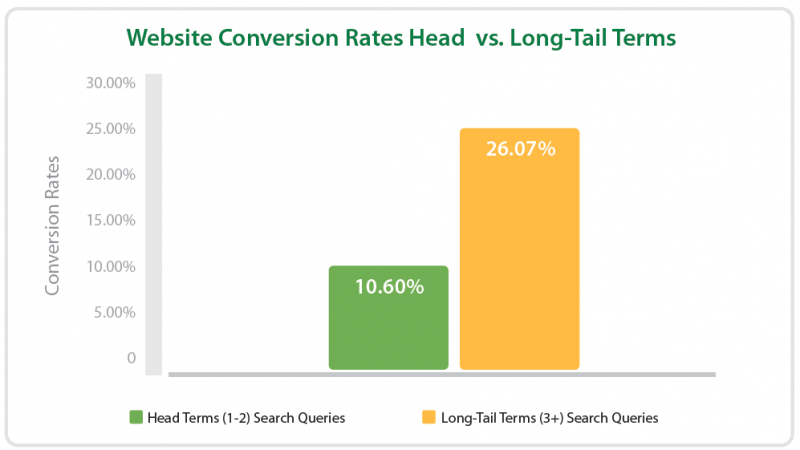

Numerous studies of long-tail traffic highlight volume, conversion rate and ranking potential as some of the most significant benefits of this keyword segment. A whitepaper published by Conductor states that the average conversion rate of long-tail traffic is 2.5 times higher than that of head terms.

Before writing this article, I ran a quick survey on Google+ to check whether everything I’ve read and experienced so far holds true. The collective answer boils down to two words: it depends.

Search Query Encryption

Google’s SSL search ((Google – SSL Search, http://support.google.com/websearch/bin/answer.py?hl=en&answer=173733)) has been gaining momentum since late 2011 ((Making search more secure, http://googleblog.blogspot.com.au/2011/10/making-search-more-secure.html)), and (not provided) now represents a significant chunk of everyone’s keyword data in Google Analytics. Turning to raw log files or your own JavaScript-based referrer tracking won’t help either, because the keyword is stripped from the referrer before the visit ever reaches your site.
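To see why your own tracking comes up empty, here is a minimal Python sketch of the referrer parsing a log-file analyser or JavaScript tracker would do. It assumes Google referrers carry the keyword in a `q` parameter and that encrypted searches arrive with that parameter empty or missing; the function name and the host check are illustrative assumptions, not any tracker’s real code.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

NOT_PROVIDED = "(not provided)"

def keyword_from_referrer(referrer: str) -> Optional[str]:
    """Extract the organic keyword from a Google referrer URL.

    Returns the keyword, "(not provided)" when the q parameter is absent or
    empty (as with encrypted search), or None for non-Google referrers.
    """
    parsed = urlparse(referrer)
    host = parsed.hostname or ""
    # Assumption: Google search hosts look like google.com or www.google.com.au.
    if not (host.startswith("google.") or host.startswith("www.google.")):
        return None
    # parse_qs drops blank values by default, so "q=" is treated as missing.
    values = parse_qs(parsed.query).get("q", [])
    keyword = values[0].strip() if values else ""
    return keyword or NOT_PROVIDED
```

A referrer such as `https://www.google.com/url?sa=t&q=&esrc=s` yields `(not provided)`: there is simply nothing left in the URL to recover.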

With encrypted search, you’re not just losing data from some of your users. You’re losing 100% of the keyword data from an entire segment: users who are signed in to any Google service, including Chrome, Gmail, Google+ or Google search itself (the exception being that some of those users will also search from other devices or locations without signing in). What you are left with is the other segment, which is likely to have its own distinct characteristics and behaviours.

The Future of SSL Search

You may not like this, but we’re likely to see encrypted search dominate in the near future, particularly as we know that Google is explicitly pushing in that direction:

“The mission of the search growth marketing team is to make that information universally accessible by enabling and educating users around the world to search on Google, search more often, and search while signed-in.[…] the more users that are signed in to Google, the better we can tailor their search results and create a unified experience across all of the Google products that they use.” ((Google Jobs – Product Marketing Manager, Search))

Google Webmaster Tools

The good news is that Google Webmaster Tools shows much of the query data that appears as (not provided) in Google Analytics. Better still, the two are now integrated. I am a huge fan of the tools and always look for innovative ways to turn the available data into actionable strategies, but a few things about them bug me.

Search Query Removal

If Google “feels” that a particular search query may reveal personal information, it will not include that query in the list of top search queries. Examples include searches for personal data, email addresses and other queries that may reveal someone’s identity or sensitive information.

Additionally, personalised search may involve searches for content shared with limited circles on Google+, and reporting those queries could reveal part of that content to the webmaster. It’s also safe to assume that very low-volume queries fall into the same category, which Google’s own help text confirms:

“To protect user privacy, Google doesn’t aggregate all data. For example, we may not track some queries that are made a very small number of times or that contain personal or sensitive information.” ((Google Webmaster Tools Help))
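The sketch below estimates how much of a site’s search visibility could disappear from the report under rules like these. Google does not publish its actual cut-off or privacy filters, so the minimum-impressions threshold and the crude email pattern here are purely hypothetical stand-ins.

```python
import re
from typing import Dict

# Hypothetical rules: Google's real threshold and filters are not public.
MIN_IMPRESSIONS = 10  # assumed cut-off for queries made "a very small number of times"
EMAIL_PATTERN = re.compile(r"\S+@\S+\.\S+")  # crude stand-in for a personal-data filter

def hidden_share(query_impressions: Dict[str, int]) -> float:
    """Share of total impressions that the assumed rules would withhold."""
    total = sum(query_impressions.values())
    hidden = sum(
        count
        for query, count in query_impressions.items()
        if count < MIN_IMPRESSIONS or EMAIL_PATTERN.search(query)
    )
    return hidden / total if total else 0.0
```

Because the threshold rule removes rare queries first, the withheld share falls disproportionately on the long tail.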

Search Query Camouflage

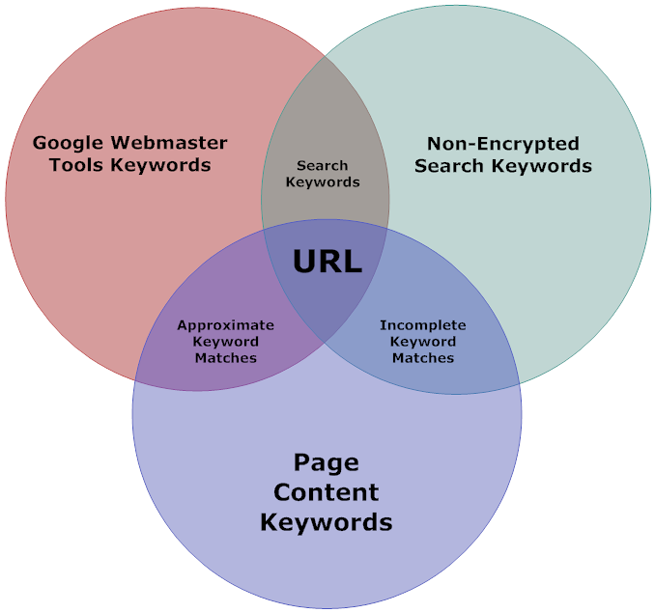

Another thing that isn’t obvious straight away is that Google Webmaster Tools merges similar search queries into groups. For example, any of the surrounding search terms in the diagram above end up displayed as the search phrase in its centre.

At first you may think the four phrase variations are simply missing from your dataset. Instead, they’re camouflaged behind the “canonical phrase” and contribute to its totals, including:

- Impressions

- Clicks

- Position

- CTR

- Change Parameters
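The following Python sketch shows how rolling variants into one canonical phrase changes the figures you see. The variant-to-canonical mapping is supplied by hand because Google’s grouping rules aren’t public, and weighting the merged position by impressions is an assumption rather than documented behaviour.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class QueryRow:
    query: str
    impressions: int
    clicks: int
    position: float  # average position for this query

def merge_variants(rows: List[QueryRow], canonical_of: Dict[str, str]) -> Dict[str, dict]:
    """Roll variant rows up into their canonical phrase.

    Impressions and clicks are summed, CTR is recomputed from the totals and
    position is impression-weighted (an assumption, not documented behaviour).
    """
    merged: Dict[str, dict] = {}
    for row in rows:
        key = canonical_of.get(row.query, row.query)
        agg = merged.setdefault(key, {"impressions": 0, "clicks": 0, "pos_weight": 0.0})
        agg["impressions"] += row.impressions
        agg["clicks"] += row.clicks
        agg["pos_weight"] += row.position * row.impressions
    for agg in merged.values():
        imps = agg["impressions"]
        weight = agg.pop("pos_weight")
        agg["ctr"] = agg["clicks"] / imps if imps else 0.0
        agg["position"] = weight / imps if imps else 0.0
    return merged
```

A variant ranking lower than the canonical phrase therefore drags the displayed position and CTR down without ever appearing as its own row.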

Data Limit

Finally, the top search queries report in Google Webmaster Tools is limited to 2,000 search phrases:

“Specific user queries for which your site appeared in search results. Webmaster Tools shows data for the top 2,000 queries that returned your site at least once or twice in search results in the selected period. This list reflects any filters you’ve set (for example, a search query for [flowers] on google.ca is counted separately from a query for [flowers] on google.com).” ((Google Webmaster Tools: Search queries, http://support.google.com/webmasters/bin/answer.py?hl=en&answer=35252))

This means that you are likely to recover only a fraction of the lost keyword data if you run a very large site (for example an online store or a catalogue).
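To get a feel for the size of that fraction, here is a back-of-the-envelope Python sketch. It assumes query impressions follow a Zipf-like curve, which real sites will only approximate; the model and its exponent are assumptions, not Google data.

```python
def top_n_coverage(distinct_queries: int, top_n: int = 2000, exponent: float = 1.0) -> float:
    """Share of impressions held by the top_n queries under a Zipf-like model.

    Assumes the query at rank r receives impressions proportional to
    1 / r**exponent; real query distributions will only approximate this.
    """
    if distinct_queries <= 0:
        raise ValueError("distinct_queries must be positive")
    weights = [1.0 / rank ** exponent for rank in range(1, distinct_queries + 1)]
    return sum(weights[:top_n]) / sum(weights)
```

Under this model, a site with 1,000,000 distinct queries and an exponent of 1.0 sees roughly 57% of its impressions covered by the top 2,000; a flatter tail (exponent below 1) pushes that share lower still.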

Disconnect

Naturally, Google Webmaster Tools data is disconnected from on-site behaviour: we can’t see how visitors arriving from individual search queries went on to navigate the site.

Working with Available Data

Since we can’t influence the trend towards encrypted search or how Google protects user privacy in Google Webmaster Tools, we have to adapt and make the most of what is available to us.

Defining Keyword Types

Group similar keywords and tag each group by type. This lets you learn the characteristics of each group (for example, by comparing their conversion rates) and adjust your site architecture or content accordingly. Keyword types worth tagging include:

- Branded (own)

- Branded (product search)

- Navigational query

- Information query

- Location-based

- Search vertical (image, video, mobile)

- Campaign-specific
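A simple rule-based tagger is one way to start. In this Python sketch, the brand names, locations and regular expressions describe an imaginary shop and are hypothetical placeholders to replace with your own vocabulary.

```python
import re
from typing import List

# Hypothetical vocabularies for an imaginary shop; replace with your own.
OWN_BRAND = {"acme"}
PRODUCT_BRANDS = {"widgetpro", "gizmax"}
LOCATIONS = {"sydney", "melbourne", "brisbane"}
NAVIGATIONAL = re.compile(r"\b(login|sign in|contact|website|homepage)\b")
INFORMATIONAL = re.compile(r"^(how|what|why|when|where|who)\b|\b(guide|tips|tutorial)\b")
CAMPAIGN = re.compile(r"\b(sale|coupon|discount|promo)\b")

def tag_keyword(keyword: str) -> List[str]:
    """Return every keyword type that matches; one keyword can carry several tags."""
    kw = keyword.lower().strip()
    words = set(kw.split())
    tags = []
    if words & OWN_BRAND:
        tags.append("branded (own)")
    if words & PRODUCT_BRANDS:
        tags.append("branded (product search)")
    if NAVIGATIONAL.search(kw):
        tags.append("navigational")
    if INFORMATIONAL.search(kw):
        tags.append("informational")
    if words & LOCATIONS:
        tags.append("location-based")
    if CAMPAIGN.search(kw):
        tags.append("campaign-specific")
    return tags or ["untagged"]
```

Search vertical is left out on purpose: it comes from the filters applied to the report, not from the query text itself.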

Backup Everything

Google Webmaster Tools doesn’t archive your data forever: search queries with change metrics are available for only 30 days, and you get 90 days of search query data without change metrics. It’s a good idea to store this data locally or in Google Drive; the report can be downloaded as a CSV file or exported directly to Google Drive. Since I deal with so many websites, I’ve developed a tool that backs up the data automatically and can display the search query timeline over an unlimited date range. The tool is currently in beta testing; if you’re interested in free access, let us know.
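If you’d rather roll your own backup, a minimal Python sketch is to append each downloaded CSV to a local SQLite archive keyed by snapshot date and query. The column names below are hypothetical; match them to the headers in your own export.

```python
import csv
import sqlite3
from typing import Iterable

def _to_int(value: str) -> int:
    # Exports may format large numbers with thousands separators, e.g. "1,200".
    return int(value.replace(",", ""))

def archive_export(conn: sqlite3.Connection, csv_lines: Iterable[str], snapshot_date: str) -> int:
    """Append one search query export to a local SQLite archive.

    Assumes hypothetical columns named Query, Impressions, Clicks and
    Avg. position. Re-importing the same date overwrites rows instead of
    duplicating them.
    """
    conn.execute(
        """CREATE TABLE IF NOT EXISTS search_queries (
               snapshot_date TEXT NOT NULL,
               query TEXT NOT NULL,
               impressions INTEGER,
               clicks INTEGER,
               position REAL,
               PRIMARY KEY (snapshot_date, query))"""
    )
    rows = [
        (snapshot_date, r["Query"], _to_int(r["Impressions"]), _to_int(r["Clicks"]),
         float(r["Avg. position"]))
        for r in csv.DictReader(csv_lines)
    ]
    conn.executemany("INSERT OR REPLACE INTO search_queries VALUES (?, ?, ?, ?, ?)", rows)
    conn.commit()
    return len(rows)
```

Running it on every export, for example on an open file handle from `open("export.csv", newline="")`, gradually builds a history that outlives the 30- and 90-day windows.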

Create Your Own Search Index

Using 80legs, you can crawl your website and build a keyword index of varying complexity (you can even analyse document titles separately from the main document body). From this data you can create both keyword groups and page-specific keyword lists. When users arrive on a page and the keyword is (not provided), you can apply the principles of attribution modelling to give credit to the keywords most likely to have brought that traffic.
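As a rough illustration, the Python sketch below starts from a page’s title and body text (the crawl itself, whether via 80legs or another crawler, is out of scope), weights title terms more heavily and then splits a page’s (not provided) visits across its terms in proportion to those weights. The title weight and stopword list are hypothetical choices, and a simple proportional split stands in for a fuller attribution model.

```python
import re
from collections import Counter
from typing import Dict, List

TITLE_WEIGHT = 3.0  # hypothetical: a title term counts as much as three body terms
STOPWORDS = {"the", "a", "an", "and", "or", "of", "to", "for", "in", "on", "with"}

def _terms(text: str) -> List[str]:
    return [t for t in re.findall(r"[a-z0-9]+", text.lower()) if t not in STOPWORDS]

def page_keyword_weights(title: str, body: str) -> Dict[str, float]:
    """Normalised term weights for one crawled page, counting title terms extra."""
    counts: Counter = Counter()
    for term in _terms(title):
        counts[term] += TITLE_WEIGHT
    for term in _terms(body):
        counts[term] += 1.0
    total = sum(counts.values())
    return {term: c / total for term, c in counts.items()} if total else {}

def attribute_not_provided(visits: int, weights: Dict[str, float]) -> Dict[str, float]:
    """Split a page's (not provided) visits across its keywords by weight."""
    return {term: visits * w for term, w in weights.items()}
```

Single words keep the example short; indexing two- and three-word phrases the same way would get closer to real long-tail queries.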

The estimates can be made more accurate by feeding in, in parallel, the non-encrypted search queries you still receive. These won’t last forever, so the sooner you start recording and backing them up, the better. With this strategy, you can keep working even when your search traffic carries no keyword data at all.
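One way to combine the two sources, sketched in Python, is additive (Dirichlet-style) smoothing: the index-based keyword shares act as a prior worth a fixed number of pseudo-visits, and each observed non-encrypted visit adds a real count. This is a standard blending technique chosen for illustration, not a method described in the article, and `prior_strength` is a hypothetical tuning knob.

```python
from typing import Dict

def blend_with_observed(prior: Dict[str, float], observed: Dict[str, int],
                        prior_strength: float = 20.0) -> Dict[str, float]:
    """Combine index-based keyword shares with observed non-encrypted query counts.

    The prior is treated as `prior_strength` pseudo-visits spread by its
    weights; each observed visit adds one count. Returns normalised shares.
    """
    keywords = set(prior) | set(observed)
    scores = {k: prior_strength * prior.get(k, 0.0) + observed.get(k, 0) for k in keywords}
    total = sum(scores.values())
    return {k: s / total for k, s in scores.items()} if total else {}
```

The more non-encrypted visits you record for a page, the less the blended shares depend on the crawl-based guess.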

Potential Calculation

How do you find a keyword that can realistically move up in the results and bring the most conversions for minimal effort?

Here’s the logic we use:

- Look at the keyword impressions, clicks, position in results and the CTR

- Calculate the average CTR for each position

- Estimate each phrase’s CTR by normalising the data specific to that keyword (factoring in its deviation from the norm)

- Assess the strength of the competing results

- Express all of the above as a single metric

- Optional: Attach a goal conversion rate and value to the phrase to get financial outcome estimates
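The steps above can be sketched in Python. This is not our tool or its potential metric, whose details aren’t covered here; the competition score, the rounding of positions and the way everything is folded into one number (extra clicks discounted by competitor strength) are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class Keyword:
    phrase: str
    impressions: int
    clicks: int
    position: float
    competition: float  # hypothetical 0..1 score: 1 means weak competing results

def average_ctr_by_position(keywords: List[Keyword]) -> Dict[int, float]:
    """Site-wide CTR for each rounded position, from your own data."""
    imps: Dict[int, int] = defaultdict(int)
    clicks: Dict[int, int] = defaultdict(int)
    for k in keywords:
        pos = round(k.position)
        imps[pos] += k.impressions
        clicks[pos] += k.clicks
    return {pos: clicks[pos] / imps[pos] for pos in imps if imps[pos]}

def potential(k: Keyword, avg_ctr: Dict[int, float], target_position: int,
              conversion_rate: float = 0.0, goal_value: float = 0.0) -> dict:
    """Estimate extra clicks (and optionally value) if k reached target_position."""
    baseline = avg_ctr.get(round(k.position))
    # How far this phrase's CTR deviates from the norm at its current position.
    deviation = (k.clicks / k.impressions) / baseline if baseline and k.impressions else 1.0
    # Expected CTR at the target position, adjusted by that deviation.
    projected_ctr = min(avg_ctr.get(target_position, 0.0) * deviation, 1.0)
    extra_clicks = max(k.impressions * projected_ctr - k.clicks, 0.0)
    # Discount by competitor strength to get a single comparable score.
    score = extra_clicks * k.competition
    # Optional: translate the score into a financial estimate.
    return {"score": score, "value": score * conversion_rate * goal_value}
```

Sorting keywords by `score` surfaces the phrases where a small ranking gain should pay off most; passing a goal conversion rate and value turns the same number into a rough revenue estimate.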

We’ve automated the calculation process with a custom-built tool, refined to the point where we not only have ranking outcome estimates but also a single metric expressing keyword potential: almost like PageRank, only useful. The version of the tool that includes the new potential metric is scheduled for release next month. If you’d like an early test-drive, let us know.

Ask Users

Remember, you can still ask your users what they typed in to find you (e.g. at the end of the signup process, in an enquiry form or at the store checkout). It could be an optional field alongside your standard “How did you find us?” question. They may not remember the exact phrase they used or bother to help you out, but any extra data you gain is better than nothing.
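Free-text answers will be messy, so it helps to normalise them before counting. This minimal Python sketch lowercases each answer, strips quotes and punctuation, collapses whitespace and skips blank optional fields; the function name is hypothetical.

```python
import re
from collections import Counter
from typing import Iterable

def tally_self_reported(answers: Iterable[str]) -> Counter:
    """Normalise free-text "what did you search for?" answers and count them."""
    tally: Counter = Counter()
    for answer in answers:
        # Lowercase, drop quotes and punctuation, collapse whitespace.
        phrase = " ".join(re.findall(r"[a-z0-9]+", answer.lower()))
        if phrase:  # skip blank optional fields
            tally[phrase] += 1
    return tally
```

The resulting counts can then be compared with the phrases reported in Google Webmaster Tools to see which self-reported queries recur.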

What’s your plan?

I’m keen to hear what tactics our readers are using to handle keyword analysis in the encrypted future. Let us know on Google+.

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211
