Earlier this year I wrote about the problem of diminishing keyword data and offered a number of solutions to overcome this problem. Today I will highlight one specific method of keyword and position mining – server log files.

These days there is no such thing as an absolute ranking position. Different users see you in different positions in search results depending on any number of factors, including algorithm variations, user location, device/platform and personalisation. This means that scraping Google from a single machine isn't an ideal way to measure your search visibility.

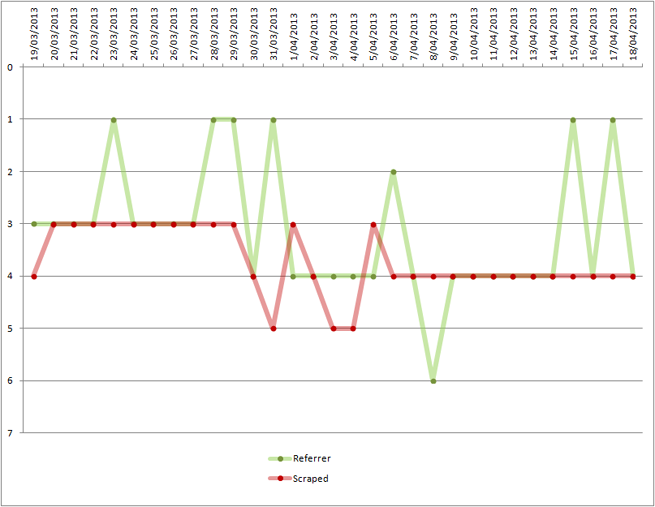

Let me illustrate what I mean by showing you our own ranking data from two different sources for keyword “SEO” in Google Australia:

The above is a comparison chart of scraped Google results (red) and the raw server log files (green) and is a great example of the difference between what you think is happening and what is actually happening in Google’s search results.

It’s Not All Lost

Most Google searches today are encrypted; however, there is still a chunk of non-encrypted search traffic you can mine for data. This includes Internet Explorer users who are not signed into any Google services, and Chrome/Firefox users who type in http://www.google.com and search from that point on (without logging in).

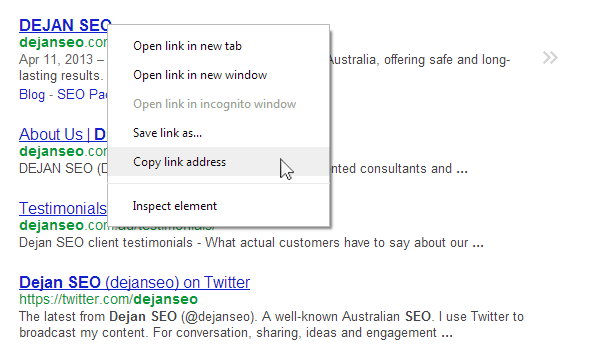

Try this query and copy the URL on the first result:

Paste it into a text file and you will see something like this:

http://www.google.com.au/url?sa=t&rct=j&q=seo%20dejan&source=web&cd=1&cad=rja&ved=0CEYQFjAA&url=http%3A%2F%2Fdejanmarketing.com%2F&ei=Ul1xUfukBIuaiAe-sICoAg&usg=AFQjCNFALxEZvNNdEYUygr2aEf09YRKX3g&bvm=bv.45373924,d.aGc

The interesting parts of this URL are:

- q=seo%20dejan (search query “seo dejan”)

- cd=1 (ranking position “1”)

Now run the same search over https:// and observe what happens to the URL:

https://www.google.com.au/url?sa=t&rct=j&q=&esrc=s&source=web&cd=1&cad=rja&ved=0CDMQFjAA&url=http%3A%2F%2Fdejanmarketing.com%2F&ei=S19xUcGYIYiSiQeN2YH4Bw&usg=AFQjCNFALxEZvNNdEYUygr2aEf09YRKX3g&bvm=bv.45373924,d.aGc

The ranking position is still there (cd=1), but the search query is gone: the q parameter is now empty.
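To make the mechanism concrete, here's a minimal Python sketch that pulls the query (q) and position (cd) out of a Google referral URL using only the standard library. The helper name `parse_google_referrer` is hypothetical, the two URLs are trimmed versions of the ones above, and it assumes the /url?... format shown here, which Google can change at any time.

```python
from typing import Optional, Tuple
from urllib.parse import parse_qs, urlsplit


def parse_google_referrer(url: str) -> Tuple[Optional[str], Optional[int]]:
    """Return (query, position) from a Google /url? referral URL.

    Hypothetical helper. Either value is None when missing or empty,
    e.g. q is empty on encrypted (https) searches.
    """
    # keep_blank_values=True so an empty "q=" is kept rather than dropped
    params = parse_qs(urlsplit(url).query, keep_blank_values=True)
    query = params.get("q", [""])[0].strip() or None
    cd = params.get("cd", [""])[0]
    position = int(cd) if cd.isdigit() else None
    return query, position


# Trimmed copies of the http and https URLs above
http_ref = ("http://www.google.com.au/url?sa=t&rct=j&q=seo%20dejan&source=web"
            "&cd=1&cad=rja&url=http%3A%2F%2Fdejanmarketing.com%2F")
https_ref = ("https://www.google.com.au/url?sa=t&rct=j&q=&esrc=s&source=web"
             "&cd=1&cad=rja&url=http%3A%2F%2Fdejanmarketing.com%2F")

print(parse_google_referrer(http_ref))   # ('seo dejan', 1)
print(parse_google_referrer(https_ref))  # (None, 1)
```

The https case still yields a position, which is why position-only referrals are worth keeping even when the keyword is missing.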

Now that we understand the mechanism, let's look at what's actually available and where it sits.

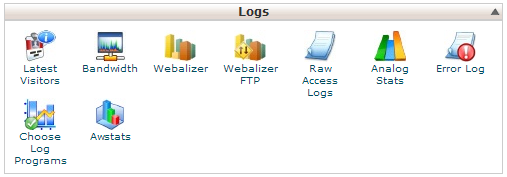

Getting the Data

Most servers are configured to collect raw access log files, and some make this data easy to access. In cPanel, for example, you'll find it under Raw Access Logs.
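If your host offers the logs as a compressed download (cPanel's raw access logs are typically gzipped), a few lines of Python can pull out just the Google referrals. This is a sketch under that assumption: the helper name `google_referral_lines` is hypothetical, and the substring check is a rough pre-filter rather than a full parse.

```python
import gzip
from typing import Iterator


def google_referral_lines(path: str) -> Iterator[str]:
    """Yield lines from a gzipped access log that carry a Google /url? referrer.

    Hypothetical helper; assumes the log is gzip-compressed text.
    """
    # "rt" opens the archive in text mode; errors="replace" survives odd bytes
    with gzip.open(path, "rt", encoding="utf-8", errors="replace") as fh:
        for line in fh:
            # Cheap pre-filter: a Google host plus the /url? click-through path
            if "://www.google." in line and "/url?" in line:
                yield line.rstrip("\n")
```

Usage would look like `for line in google_referral_lines("access-log.gz"): print(line)`, where the file name is a placeholder for whatever your host gives you.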

Here’s an example from our own log file:

109.149.xx.xx - - [19/Apr/2013:22:49:41 +1000] "GET /google-link-disavow-complete-guide/ HTTP/1.1" 200 17115 "http://www.google.co.uk/url?sa=t&rct=j&q=where+to+download+google+disavow+doc&source=web&cd=3&ved=0CEgQFjAC&url=http%3A%2F%2Fdejanmarketing.com%2Fgoogle-link-disavow-complete-guide%2F&ei=Nj1xUbbwD4nIPKT8gMAC&usg=AFQjCNHdfYgDAS2DT9MvV-Y7Hz1VZomYAg&sig2=YjxMevNdGpOcODRBcH57hQ" "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_3) AppleWebKit/536.28.10 (KHTML, like Gecko) Version/6.0.3 Safari/536.28.10" 351593

In the above example we can see that somebody searched google.co.uk for “where to download google disavow doc” and found this page at position 3.
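Here's a sketch of how that reading could be automated for one line. It assumes the Apache "combined" log layout (IP, identity, user, [time], "request", status, size, "referrer", "user agent"); any extra trailing field, like the final number in our line, is simply ignored. The function name and the record keys are hypothetical, and the referrer in the sample is trimmed.

```python
import re
from datetime import datetime
from typing import Optional
from urllib.parse import parse_qs, urlsplit

# Apache "combined" format; anything after the user agent is ignored
LOG_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def parse_log_line(line: str) -> Optional[dict]:
    """Return engine/keyword/position/page for a Google referral, else None."""
    m = LOG_RE.match(line)
    if not m:
        return None
    ref = urlsplit(m["referrer"])
    host = ref.hostname or ""
    # Only Google's /url? click-through referrals carry cd (and maybe q)
    if not host.startswith("www.google.") or ref.path != "/url":
        return None
    params = parse_qs(ref.query, keep_blank_values=True)
    cd = params.get("cd", [""])[0]
    request = m["request"].split()
    return {
        "time": datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z"),
        "engine": host,
        "keyword": params.get("q", [""])[0].strip() or None,  # None if encrypted
        "position": int(cd) if cd.isdigit() else None,
        "page": request[1] if len(request) > 1 else None,
    }


sample = (
    '109.149.xx.xx - - [19/Apr/2013:22:49:41 +1000] '
    '"GET /google-link-disavow-complete-guide/ HTTP/1.1" 200 17115 '
    '"http://www.google.co.uk/url?sa=t&rct=j&q=where+to+download+google+disavow+doc'
    '&source=web&cd=3" '
    '"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_8_3)" 351593'
)
record = parse_log_line(sample)
print(record["keyword"], record["position"])  # where to download google disavow doc 3
```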

As I said earlier, you won't always get both the search phrase and the position, but when you do, save that data and use it in your keyword research and performance tracking.

Naturally, you don't want to do this manually for every keyword/position combination you detect, so it's worth putting together a script that automates the process and compiles the data in a format you can use (e.g. CSV or XML).
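A minimal sketch of the "compile" step, assuming you already have one record per referral with hypothetical keys `date`, `keyword`, `page` and `position`: it groups visits by day, keyword and landing page, averages the observed position, and writes CSV with the standard `csv` module. Records missing a keyword or a position are skipped here; you may prefer to keep position-only rows separately.

```python
import csv
import io
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

FIELDS = ["date", "keyword", "page", "visits", "avg_position"]


def summarise(records: Iterable[dict]) -> List[dict]:
    """Group referral records by (date, keyword, page) and average position."""
    positions: Dict[Tuple[str, str, str], List[int]] = defaultdict(list)
    for r in records:
        # Keep only rows where both the keyword and the position survived
        if r.get("keyword") and r.get("position") is not None:
            positions[(r["date"], r["keyword"], r["page"])].append(r["position"])
    return [
        {"date": d, "keyword": k, "page": p,
         "visits": len(pos), "avg_position": round(sum(pos) / len(pos), 1)}
        for (d, k, p), pos in sorted(positions.items())
    ]


def to_csv(rows: List[dict]) -> str:
    """Serialise summary rows to CSV text (header first)."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()
```

Averaging per day keeps the output small enough to chart, while the visit count shows how much evidence sits behind each average.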

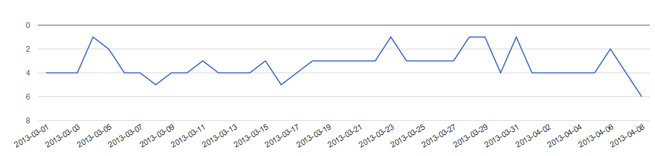

We use our own tool which does this for us on a daily basis and are able to track keyword performance through Google’s own search referral URLs:

We've also introduced a range of filters (by search engine, keyword, date range, etc.).
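Filters like these are straightforward once the data is in records. The sketch below is not our tool's code; it assumes hypothetical records with `engine`, `keyword` and `date` keys and applies whichever filters are supplied, treating the date range as inclusive and the keyword filter as a case-insensitive substring match.

```python
from datetime import date
from typing import Iterable, List, Optional


def filter_records(
    records: Iterable[dict],
    engine: Optional[str] = None,
    keyword: Optional[str] = None,
    start: Optional[date] = None,
    end: Optional[date] = None,
) -> List[dict]:
    """Keep records matching every supplied filter (None means 'no filter')."""
    out = []
    for r in records:
        if engine and r["engine"] != engine:
            continue
        # Case-insensitive substring match; encrypted rows have keyword None
        if keyword and keyword.lower() not in (r["keyword"] or "").lower():
            continue
        if start and r["date"] < start:
            continue
        if end and r["date"] > end:  # inclusive upper bound
            continue
        out.append(r)
    return out
```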

What I like about it is that the data is completely fresh: I get to see it soon after the referrer string is added to the log file.

Data Accuracy

Our development team is currently working on combining server log file data with Google Webmaster Tools keyword reports to improve the accuracy of our search visibility and keyword performance data. This will be done without acquiring ranking data in ‘traditional ways’ (read: SERP scraping).

Another interesting way of dealing with the disappearing keyword data was proposed by Alistair Lattimore: attributing keywords to encrypted traffic based on page content. We're considering this option as well, though it will probably involve creating and maintaining a ‘mini index’ for each website we wish to track.
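To show what a ‘mini index’ might look like in practice, here is a deliberately simple, hypothetical illustration. It is not Alistair Lattimore's method, whose details aren't described here. It indexes the words on each page, treats a keyword seen in unencrypted referrals as a candidate for a page if all of its words appear on that page, and then shares the page's encrypted visits among candidates in proportion to how often each keyword was seen.

```python
import re
from typing import Dict, List


def tokenize(text: str) -> List[str]:
    """Lower-case alphanumeric words; a crude stand-in for real text analysis."""
    return re.findall(r"[a-z0-9]+", text.lower())


def attribute_encrypted(
    pages: Dict[str, str],             # page URL -> page text (hypothetical input)
    known_counts: Dict[str, int],      # keyword -> visits seen with q present
    encrypted_visits: Dict[str, int],  # page URL -> visits with an empty q
) -> Dict[str, Dict[str, float]]:
    # Mini index: page URL -> set of words appearing in its content
    index = {page: set(tokenize(text)) for page, text in pages.items()}
    result: Dict[str, Dict[str, float]] = {}
    for page, visits in encrypted_visits.items():
        words = index.get(page, set())
        # Candidates: known keywords whose every word appears on this page
        candidates = {}
        for kw, n in known_counts.items():
            kw_words = set(tokenize(kw))
            if kw_words and kw_words <= words:
                candidates[kw] = n
        total = sum(candidates.values())
        if total == 0:
            continue  # no evidence for this page; leave its visits unattributed
        # Split encrypted visits in proportion to known keyword volume
        result[page] = {kw: visits * n / total for kw, n in candidates.items()}
    return result
```

The proportional split is only one possible weighting; the main cost is keeping the page index current as content changes, which is the maintenance burden mentioned above.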

I'd love to hear your thoughts on, and experience with, keyword performance tracking and how you measure search visibility.

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211

Hi Dan,

This is a really good discovery 🙂

Thank you for sharing this!

Seriously, I was going to do the same thing. I was thinking of integrating Google search referrals and avoiding wasting time on getting rankings for keywords.

I think this is the best way to go ahead.


Hello Dan,

Great post

This is super amazing and well thought out Dan TA 🙂

Thanks for the great posts… Out of curiosity… if SERP scraping (traditional ranking) is doomed, won't companies that built products around this soon be out of business? AWR, Brightlocal, Bright Edge, Keywordspy, Spyfu, SEMRUSH… What is your opinion? RavenTools and SEOMOZ have already dropped ranking tools. I see many creative methods to move around the pink elephant (ranking) in the room (thank you), but most of these solutions are expensive to implement (time, programming), and for an SMB paying for it is not an option. PS: The next shot across the bow was Google's removal of the related searches tool from the UI: http://www.blindfiveyearold.com/google-removes-related-searches

Really a good one, thanks for sharing.

I agree with you that different users will see you in different positions in search results based on any number of factors, including algorithm variations, user location, device/platform and personalisation. I really enjoyed reading your post. Keep posting, and thanks.

Hi, can you elaborate on Alistair Lattimore's method of attributing keywords to encrypted traffic based on page content?

Hey, thanks for the useful suggestions on tracking ranks via log files. Will try it out!