Theory behind evaluation of search result quality through algorithmic treatment of the web index. Read on and discover some of the fundamental forces behind search engine rankings.

Part 1 of the Search Quality Series by Dejan SEO

Introduction

There are different types of graphs on the web, useful to search engines in processing and evaluation of relevance and reputation of pages in their index. Here are examples of graphs currently being utilised by search engines:

- Link Graph: Nodes (pages, documents) connected by directed edges (links).

- Co-Citation Graph: Nodes connected by undirected edges (A & B are connected if C links to both)

- Social Graph: Explicit and implicit connections between individuals on the web.

- Knowledge Graph: A collection of interconnected canonical entities and their attributes

Connectivity-Based Ranking

Query-Independent

Google converts its entire index into a graph in order to (among other things) calculate connectivity-based ranking for that collection. The first mode of connectivity-based ranking system is a query-independent ranking model based on document connectivity alone. This is where PageRank algorithm comes in handy. In a nutshell, PageRank is the value of probability of users staying or leaving the document. PageRank alone, however, is incapable of producing relevant results and simply reflects the perceived importance of the page on the web.

[blockquote type=”blockquote_quotes” align=”right”]A document which points to many others might be a good hub, and a document that many documents point to might be a good authority. Recursively, a document that points to many good authorities might be an even better hub, and similarly a document pointed to by many good hubs might be an even better authority. , Monika Henzinger, Google Inc.[/blockquote]

Query-Dependent

The second mode of connectivity-based ranking is query-dependent and capable of slicing the link graph into a relevant sub-set which can be re-ordered and re-ranked in a way more meaningful to a given search query [1].

In addition to a standard indegree model (inbound links) two additional factors are introduces to improve relevance of returned documents:

- Hub score

- Authority score

Both are part of the HITS algorithm which stipulates that hubs are nodes which link to pages relevant to the search query and authority nodes are the pages expected to have relevant content.

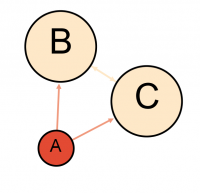

The concept of query-dependent / connectivity-based algorithm in action is illustrated here:

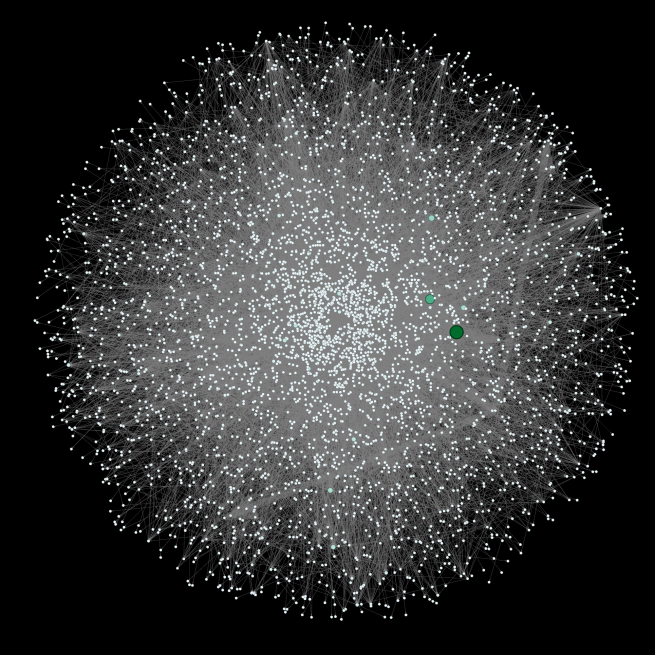

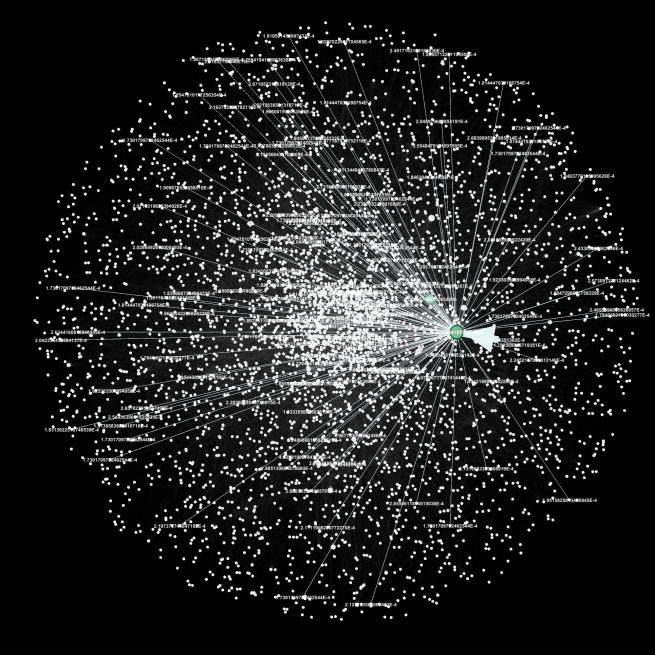

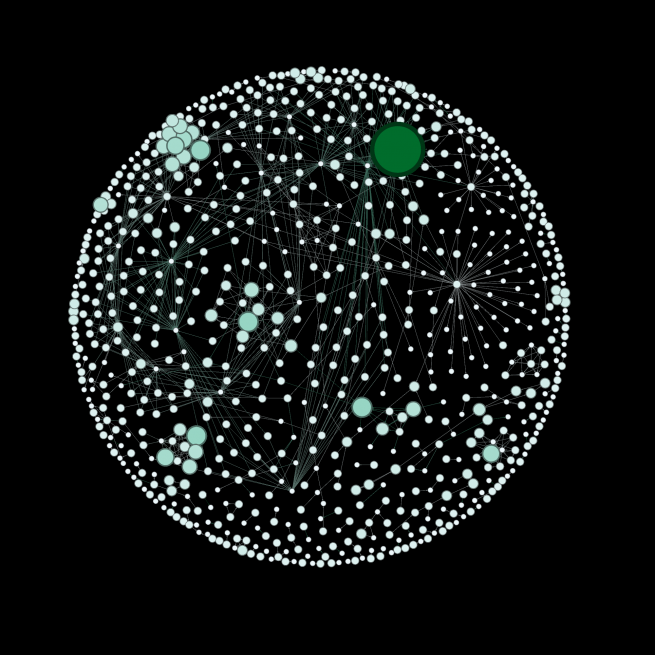

The above image represents a micro-web of not more than 10,000 domains and the ranking system is calculated on a query-independent principle. In the second example the algorithm identifies only those nodes which are found to be substantially related to the user query:

This allows for removal of query-irrelevant nodes and calculation of reputation only within query-specific context.

Simplified Visual Examples

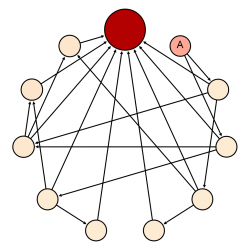

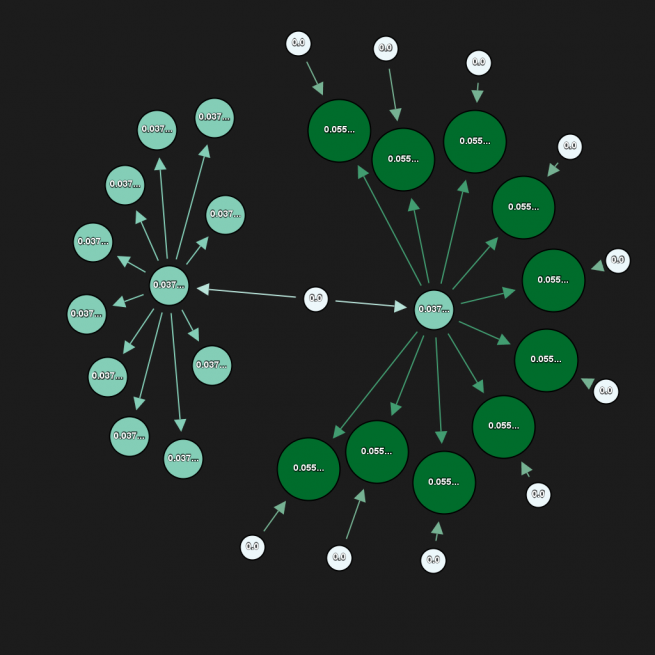

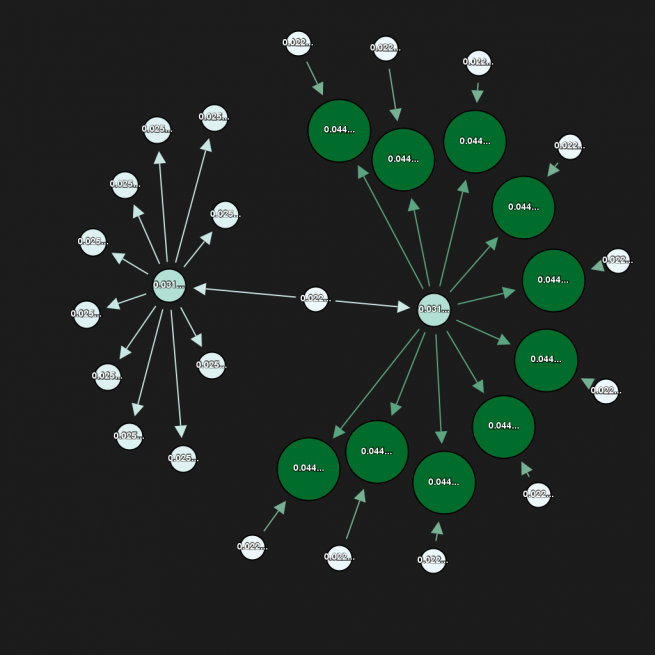

In the following graph we’re seeing authority in action:

Note that authority works on an egalitarian level and exhibits disregard for inbound link qualities. Another quantitative node analysis is hub detection:

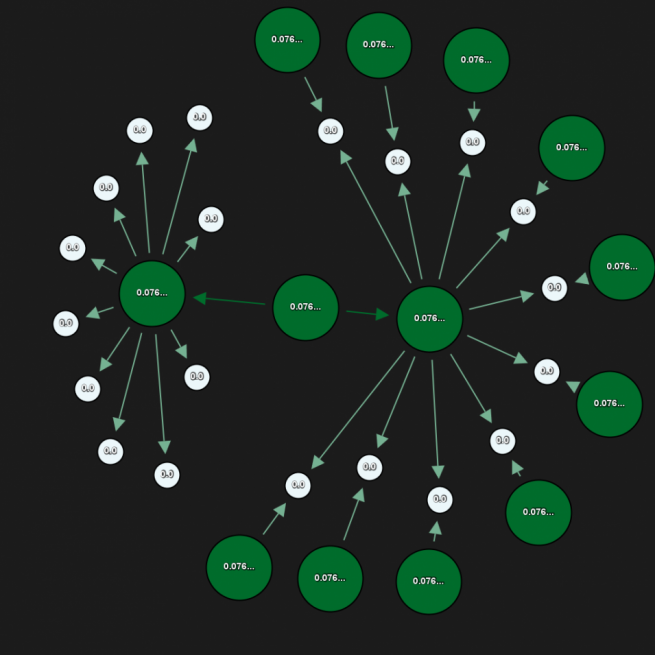

Finally, let’s see how PageRank solves the problem of equal count by introducing a more elegant way of ascertaining ranking value of documents in our collection:

A combination of all three will produce the best results with first two identifying topical relevance and the last one for sorting purposes. There are in total five key elements to Google’s algorithmic link analysis and processing:

- In-degree (inbound links)

- Out-degree (outbound links)

- HITS authority score [2] (quantity of relevant inbound links in a sub-set of the index)

- HITS hub score (quantity of relevant outbound links in a sub-set of the index)

- PageRank (random surfer model)

Top-Level Spam Detection

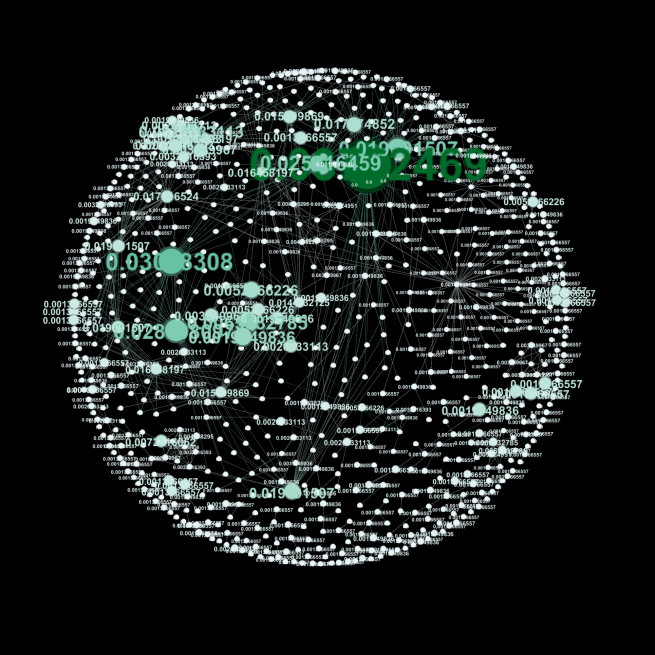

Pictured below is a multi-mode visualisation of an artificial blog network consisting of 4762 domains and 9849 connections between domains. Note that we collapsed each domain into a single node in order to simplify the visualisation. The practice of regarding all nodes within a single domain as a single node is not uncommon and has application as one of the steps in evaluation of query-dependent rankings.

The observed network is real (sent to us by a genuine spammer offering to buy links). Numeric values attached to each node in the graph represent the internally calculated PageRank score and have been used to scale and colour the nodes accordingly. Highlighted in green are the top link recipients within the collection.

Patterns in link networks such as the one above allow easy top-level detection of link schemes designed for ranking algorithm manipulation. Same principles for detection of relevance and topical similarity can be applied to look for abnormal patterns in the linking structures of any sub-set of the link graph. For example, here are the top perceived hubs within this network:

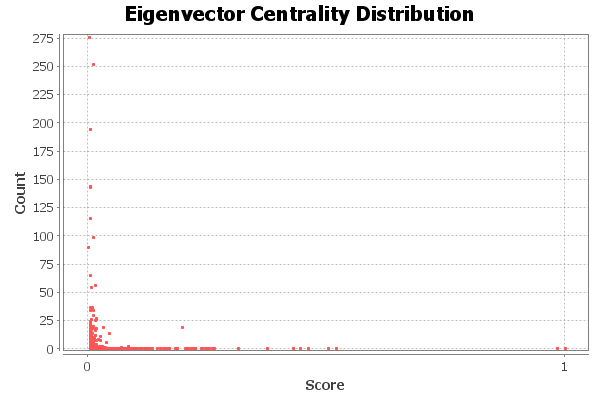

Artificial linking patterns are evident even after rudimentary visual examination, but further statistics can be extrapolated through several statistical parameters including:

- Network Overview:

- Average Degree (weighted / non-weighted)

- Network Diameter

- Graph Density

- HITS

- Modularity

- PageRank

- Number of weekly and strongly connected components

- Node Overview:

- Clustering Coefficient (including average)

- Eigenvector Centrality

- Edge Overview::

- Average Path Length

- Neighbourhood Overlap / Embeddedness

Note that in Google’s algorithm, PageRank plays the role of the dominant eigenvector of the probability matrix as part of the ‘random surfer model‘ [4].

Consider the number of options available for spam detection, all this without any aid of additional signals and metrics available including, anchor text, content and many other technical patterns. By introducing extra elements in spam analysis Google can predict with great certainty if a page exhibits unnatural structure or linking patterns.

Practical Takeaways

1) Linking out to authoritative content is a property of a hub. Hubs are important to search engines to define topic and categorise pages.

2) Search engines can infer topical relation between the two sites without an explicit link by using co-citation. Query relevant hub A links to both B and C so they must be also relevant to the search query. A virtual link between the two is formed.

3) Avoid artificial link structures as they are discoverable on many levels.

References:

[1] Henzinger, M. Link Analysis in Web Information Retrieval

[2] J. Kleinberg. Authoritative Sources in a Hyperlinked Environment. In Proceedings of the 9th Annual ACM-SIAM Symposium on Discrete Algorithms, pages 668–677, January 1998.

[3] Bastian M., Heymann S., Jacomy M. (2009). Gephi An Open Source Software for Exploring and Manipulating Networks. International AAAI Conference on Weblogs and Social Media.

[4] Page, L., Brin, S., Motwani, R., Winograd, T. (1999), The PageRank Citation Ranking Bringing Order to the Web.

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211

Your plotted graphs are great and well describing the link building process through link graph, knowledge graph, social graph and co-citation graph. Thanks for sharing such a useful piece of information for link building analysis and their connectivity between each other.