PDF documents are abundant on the web. A quick search returns over one billion results from Google’s index, and almost all of these documents have outbound links. Surprisingly, all evidence so far suggests that, by design, PDF documents don’t circulate link signals the way HTML documents do.

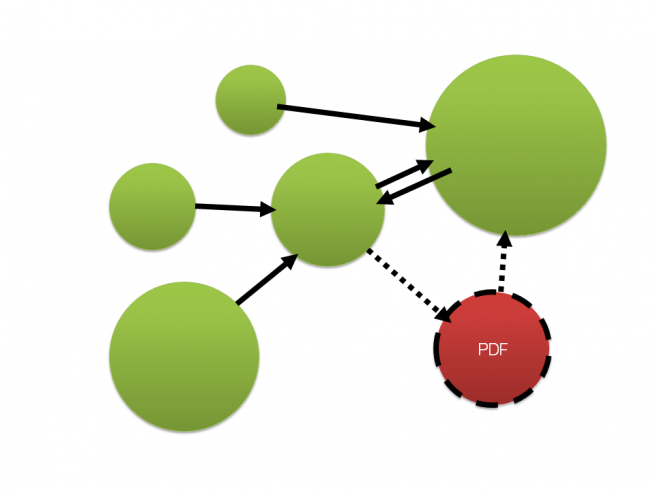

According to link graph theory, the inbound links that PDF documents receive are classified as dangling links, and the PDFs themselves are treated as dangling nodes. This is due to the absence of valid outbound links within this file type.

Google’s link graph processing excludes dangling nodes in its initial calculation cycle and consolidates their values in a secondary run. The implications for the accuracy and nature of the PageRank assigned to dangling nodes remain unclear.
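The two-stage treatment described above can be sketched in a few lines of Python. This is only an illustration based on the description in the original PageRank paper, not Google’s actual implementation: the function names, the toy graph, the 0.85 damping factor and the formula used to score dangling nodes in the second pass are all assumptions made for the example.

```python
def core_pagerank(links, pages, damping=0.85, iterations=100):
    """Power iteration over `pages`, following only links that stay inside `pages`."""
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            outs = [t for t in links.get(p, []) if t in pages]
            if outs:
                for t in outs:
                    new[t] += damping * rank[p] / len(outs)
            else:
                # A page left with no outlinks spreads its score evenly.
                for t in pages:
                    new[t] += damping * rank[p] / n
        rank = new
    return rank


def two_pass_pagerank(links, damping=0.85):
    """Rank the core graph first, then add dangling nodes back in (assumed scheme)."""
    pages = set(links) | {t for outs in links.values() for t in outs}
    dangling = {p for p in pages if not links.get(p)}
    core = pages - dangling
    # Pass 1: dangling nodes are removed from the calculation entirely.
    rank = core_pagerank(links, core, damping)
    # Pass 2: each dangling node is scored from the pages that link to it.
    for node in dangling:
        inbound = sum(rank[p] * links[p].count(node) / len(links[p]) for p in core)
        rank[node] = (1.0 - damping) / len(pages) + damping * inbound
    return rank


# Hypothetical toy graph. The PDF's own outbound link is deliberately absent,
# mirroring how the document would be treated as a dangling node.
graph = {
    "a.html": ["b.html", "brochure.pdf"],
    "b.html": ["a.html", "brochure.pdf"],
    "c.html": ["a.html", "brochure.pdf"],
}
scores = two_pass_pagerank(graph)
for page, score in sorted(scores.items()):
    print(f"{page}: {score:.4f}")
```

In this toy graph the PDF ends up with a score of its own, yet nothing it links to can benefit, because its outbound links never enter the calculation.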

Here’s an example of a PDF slideshow from a guest lecture I gave at Griffith University last year. The URL is indexed and has a PageRank of 2. The link to my website even renders as clean HTML in Google’s cache. One would assume that, since Google can interpret PDF links and convert them to HTML, it can also use them as part of its link graph.

I went on a hunt for any references and clarifications made directly by Googlers and found the following:

“We absolutely do process PDF files. I am not going to talk about whether links in PDF files pass PageRank. But, a good way to think about PDFs is that they are kind of like Flash in that they aren’t a file format that’s inherent and native to the web, but they can be very useful. In the same way that we try to find useful content within a Flash file, we try to find the useful content within a PDF file.

At the same time, users don’t always like being sent to a PDF. If you can make your content in a Web-Native format, such as pure HTML, that’s often a little more useful to users than just a pure PDF file.”

Source: Matt Cutts, Stone Temple Consulting

By Mark Traphagen via Steve Martin

“John Mueller says that Google will read links in any file (pdf,xls,doc,etc), but will not follow them with link juice. Only proper HTML anchor tagged links in files will pass link juice.”

Source: https://plus.google.com/+MarkTraphagen/posts/aAeAY13ujHx

From this I conclude that PDF links are better compared with URLs found in Flash files or JavaScript. As with rel="nofollow" links and written URL references, Google will use PDF links for document discovery, but they won’t be treated the same way as web-native HTML links.

SEO Implications

Webmasters hoping to maximise the value of inbound links should ensure those links point to their HTML assets. Any inbound links a PDF receives will help the ranking of the document itself, but the signals will not flow through to the rest of the publisher’s website, despite the presence of links within the PDF document.

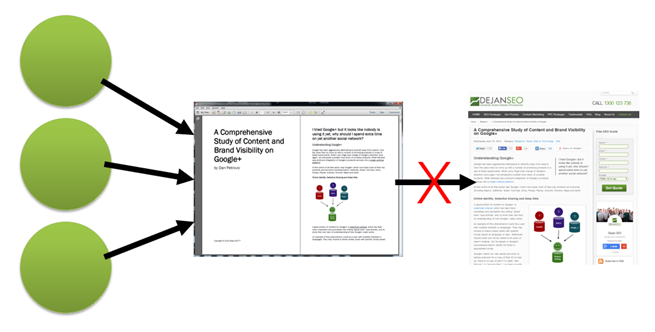

Illustration: The PDF document receives PageRank from three inbound links. However, the link within the PDF doesn’t pass PageRank to its HTML counterpart, which would otherwise flow it through to the rest of the site and back out to the web through any external outbound links. The accumulated PageRank stays “trapped” within the PDF document.

I’ve published numerous pieces of content in PDF format, considering it to be both an effective content container and a link-earning medium. They were shared, hosted, distributed, embedded, linked to and even showed PageRank. In total, my PDFs attracted 180 organic links from 109 domains. I expected the rest of my site to benefit from links pointing to PDFs, which in turn link to other parts of my site.

To learn that all the inbound links to my PDFs don’t actually help the rest of my site rank felt like a bit of a punch in the gut. I had to come up with a solution.

The Hack

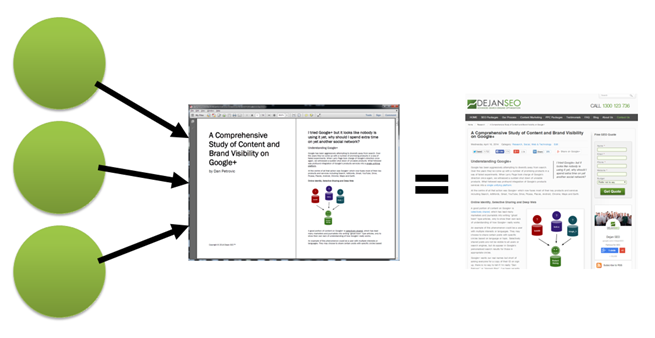

The idea for our hack came from Martin Reed, who suggested swapping a PDF document with its HTML counterpart, replacing it in search results and hopefully liberating the PageRank received through inbound links. We would then flow link signals through to the rest of the site using proper HTML links within the canonical HTML document.

Illustration: PDF is canonicalised towards its HTML counterpart. Links pointing towards the PDF document are now contributing to PageRank of the HTML page, which can then pass it through to the rest of the site.
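To see why the switch could matter, the two illustrations can be compared on a toy graph. The sketch below is a rough model only. It assumes, without evidence, that links pointing at a canonicalised PDF are counted as if they pointed at the HTML page, which is exactly what remains to be tested. The node names, the damping factor of 0.85 and the even redistribution of a dangling node’s score are likewise assumptions.

```python
def pagerank(links, damping=0.85, iterations=100):
    """Power iteration; pages without outlinks spread their score evenly."""
    pages = sorted(set(links) | {t for outs in links.values() for t in outs})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        new = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            outs = links.get(p) or pages  # dangling node: jump to any page
            for t in outs:
                new[t] += damping * rank[p] / len(outs)
        rank = new
    return rank


# Before: three external pages link to the PDF, whose own link is not counted.
before = {
    "ext1": ["pdf"], "ext2": ["pdf"], "ext3": ["pdf"],
    "html": ["home"],
    "home": ["html"],
}
# After (assumed effect of rel="canonical"): the same inbound links are
# credited to the HTML page, which links on to the rest of the site.
after = {
    "ext1": ["html"], "ext2": ["html"], "ext3": ["html"],
    "html": ["home"],
    "home": ["html"],
}

rank_before = pagerank(before)
rank_after = pagerank(after)
site_before = rank_before["html"] + rank_before["home"]
site_after = rank_after["html"] + rank_after["home"]
print(f"site share before: {site_before:.3f}, after: {site_after:.3f}")
```

Under these assumptions the site’s own pages hold a larger share of the total score once the inbound links are credited to the HTML page. Real-world behaviour may differ.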

Method 1

.htaccess canonicalisation switch:

# Requires mod_headers to be enabled.
<Files "Choose-Dejan-SEO.pdf">
    Header add Link '<https://dejanmarketing.com/media/html/Choose-Dejan-SEO/>; rel="canonical"'
</Files>

Method 2

.php header canonicalisation switch:

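<?php
// Serve the PDF while pointing search engines at the HTML version.
header('Content-Type: application/pdf');
// The header name must be followed by a colon.
header('Link: <https://dejanmarketing.com/media/html/Choose-Dejan-SEO/>; rel="canonical"');
readfile('Choose-Dejan-SEO.pdf');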
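Either method can be checked by requesting the PDF and inspecting the Link response header. Below is a rough sketch of a parser that pulls the canonical target out of a header value. The function name is hypothetical and the parsing is deliberately simplified: it does not handle commas or semicolons inside quoted parameter values.

```python
import re


def canonical_from_link_header(value):
    """Return the rel="canonical" target from a Link header value, or None."""
    # Each link-value looks like: <URL>; param=value; param="value"
    for match in re.finditer(r'<([^>]*)>((?:\s*;\s*[^;,]+)*)', value):
        url, params = match.group(1), match.group(2)
        for param in params.split(";"):
            name, _, val = param.strip().partition("=")
            rel_values = val.strip().strip('"').lower().split()
            if name.strip().lower() == "rel" and "canonical" in rel_values:
                return url
    return None


# Header value as sent by the snippets above.
header = '<https://dejanmarketing.com/media/html/Choose-Dejan-SEO/>; rel="canonical"'
print(canonical_from_link_header(header))
```

To test a live URL, the header value could be read from a HEAD request, for example with `urllib.request`, and passed to the same function.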

Test Results

Our test URL was an old brochure, indexed, cached and showing toolbar PageRank 5. The end result was successful canonicalisation to a HTML equivalent in search results and the transfer of social signals (+1s).

Screenshot: HTML page now appears in search results instead of the PDF.

By tracking Googlebot’s activities in a separate experiment, we proved that Google will use PDF links to discover new pages and eventually index them. Unfortunately, toolbar PageRank (TBPR) hasn’t updated yet (and it may never update).

Long-Term Observations

Our first PDF link experiment took place in 2012 and involved a research paper (PageRank 4) with two outbound links, one of which pointed to our test page. Naturally, at the time our test page hadn’t been visited or linked to from anywhere else. In over two years of testing, the PDF failed to transfer any visible amount of PageRank to our test page. Had that been a HTML link, the target page would have received a PageRank of 3, or at least 2, depending on whether the source was a strong or weak rounded PR4 (e.g. 3.6 – 4.4).

Practical Application

Most of our media is served through a CDN. Upon verifying dejanmarketing.com in Google Webmaster Tools we learned about all the traffic and links involved directly with our CDN resources and immediately decided to fix it.

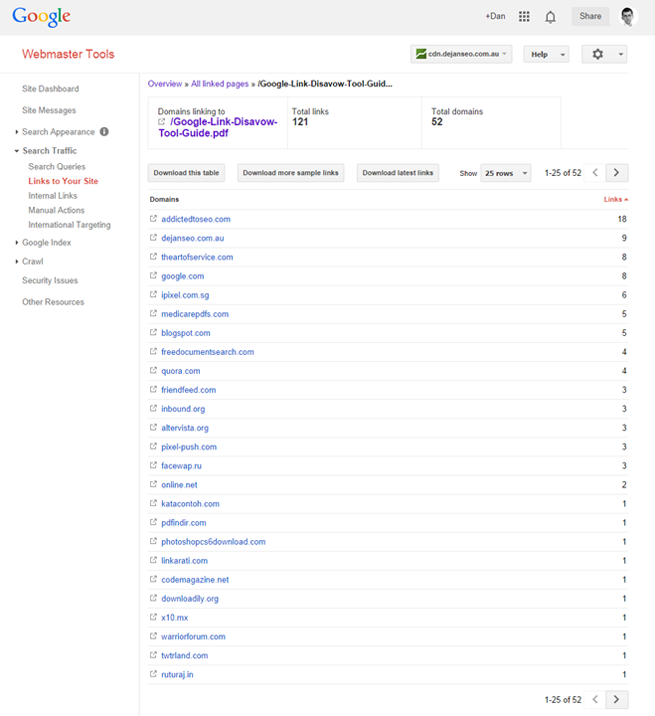

In the screenshot above you can see that Google selected the PDF version of our link disavow tool guide, and that it was the PDF that attracted links rather than our HTML file:

Screenshot: Google Webmaster Tools for our CDN subdomain.

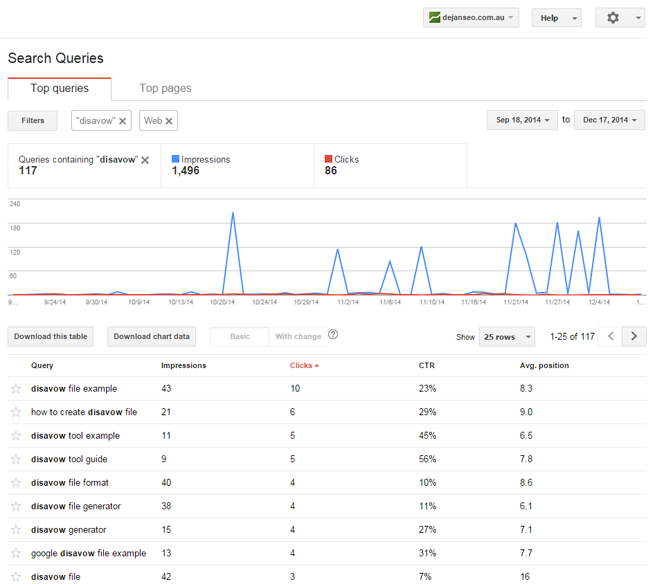

That’s 121 links from 52 different domains going the wrong way. We wanted to turn that around, so we applied the above-mentioned hack to our .htaccess file, and the switch happened within days. Now it’s our HTML page that gets discovered and linked to instead, and we’re already seeing queries appearing in our main domain’s search traffic:

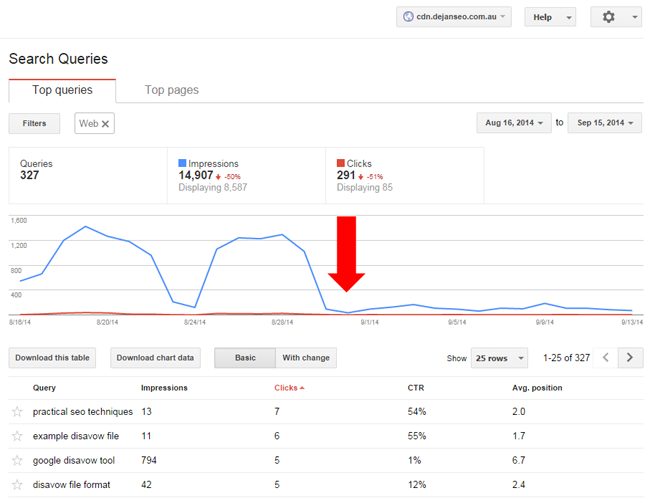

Naturally, since canonicalisation the CDN’s search traffic has shown a huge decline as Google switched search results to our primary domain.

Future Research

If PageRank of dangling nodes proves to be a post-processing estimate and a “cosmetic” value in toolbar PageRank, there is a chance these nodes may be “sterile” in terms of their ability to pass link signals, regardless of canonicalisation or redirects.

A follow-up experiment has been scheduled to test the impact of canonicalised PDF links on ranking. We’ll publish the results in 2015.

References

The PageRank Citation Ranking: Bringing Order to the Web

Larry Page

“Dangling links are simply links that point to any page with no outgoing links. […] Because dangling links do not affect the ranking of any other page directly, we simply remove them from the system until all the PageRanks are calculated. After all the PageRanks are calculated, they can be added back in, without affecting things significantly. Notice the normalization of the other links on the same page as a link which was removed will change slightly, but this should not have a large effect. […] After the weights have converged, we add the dangling links back in and recompute the rankings. Note after adding the dangling links back in, we need to iterate as many times as was required to remove the dangling links. Otherwise, some of the dangling links will have a zero weight.”

Mathematical Properties and Analysis of Google’s PageRank

Ilse C.F. Ipsen, Rebecca S. Wills

“If web page i has no outlinks then row i of H is zero. Such a web page, called a dangling node, can be a pdf file or a page whose links have not yet been crawled.”

http://mira.sai.msu.ru/~megera/docs/IR/search/pagerank/cedya.pdf

Ranking the Web Frontier

Nadav Eiron, Kevin S. McCurley, John A. Tomlin

“Another reason for dangling nodes is pages that genuinely have no outlink. For example, most PostScript and PDF files on the web contain no embedded outlinks, and yet the content tends to be of relatively high quality. A URL might also be a dangling page if it has a meta tag indicating that links should not be followed from the page, or if it requires authentication.”

http://meyer.math.ncsu.edu/Meyer/PS_Files/ReorderingPageRank.pdf

A REORDERING FOR THE PAGERANK PROBLEM

AMY N. LANGVILLE AND CARL D. MEYER

“It is well known that many subsets of the web contain a large proportion of dangling nodes, i.e., webpages with no outlinks. Dangling nodes can result from many sources: a page containing an image or a PostScript or pdf file; a page of data tables; or a page whose links have yet to be crawled by the search engine’s spider. These dangling nodes can present philosophical, storage, and computational issues for a search engine such as Google that employs a ranking system for ordering retrieved webpages.”

“We now turn to the philosophical issue of the presence of dangling nodes. In one of their early papers [2], Brin et al. report that they “often remove dangling nodes during the computation of PageRank, then add them back in after the PageRanks have converged.” From this vague statement it is hard to say exactly how Brin and Page compute PageRank for the dangling nodes. However, the removal of dangling nodes at any time during the power method does not make intuitive sense. Some dangling nodes should receive high PageRank. For example, a very authoritative pdf file could have many inlinks from respected sources and thus should receive a high PageRank. Simply removing the dangling nodes biases the PageRank vector unjustly.”

http://meyer.math.ncsu.edu/Meyer/PS_Files/ReorderingPageRank.pdf

PAGERANK COMPUTATION, WITH SPECIAL ATTENTION TO DANGLING NODES

ILSE C. F. IPSEN AND TERESA M. SELEE

“Image files or pdf files, and uncrawled or protected pages have no links to other pages. These pages are called dangling nodes, and their number may exceed the number of nondangling pages.”

http://www4.ncsu.edu/~ipsen/ps/simax066433.pdf

The effects of dangling nodes on citation networks

Erjia Yan and Ying Ding

“In the language of network analysis, dangling nodes denote the nodes without outgoing links. With the advent of the Web, the concept of dangling nodes became a common topic. It is well understood that most web pages link to and are linked by other pages. But it is possible that some pages do not contain any valid hyperlinks, which may be broken pages (i.e., those that formerly contained hyperlinks but have now become “403/404 Error”) or multimedia data types (i.e., PDF, JPG, PS, MOV).”

http://www.pages.drexel.edu/~ey86/papers/issi2011_submission_157.pdf

Google PageRank

Professor Brian A. Davey, La Trobe University

“The problem is caused by the row of zeros in the matrix H. This row of zeros corresponds to the fact that P2 is a dangling node, that is, it has no outlinks. Dangling nodes are very common in the World Wide Web (for example: image files, PDF documents, etc.), and they cause a problem for our random web surfer. When Webster enters a dangling node, he has nowhere to go and is stuck. To overcome this problem, Brin and Page declare that, when Webster enters a dangling page, he may then jump to any page at random.”

http://www.amsi.org.au/teacher_modules/pdfs/Maths_delivers/Pagerank5.pdf

Deeper Inside PageRank

AMY N. LANGVILLE AND CARL D. MEYER

“The pages of the web can be classified as either dangling nodes or nondangling nodes. Recall that dangling nodes are webpages that contain no outlinks. All other pages, having at least one outlink, are called nondangling nodes. Dangling nodes exist in many forms. For example, a page of data, a page with a postscript graph, a page with jpeg pictures, a pdf document, a page that has been fetched by a crawler but not yet explored–these are all examples of possible dangling nodes. As the research community moves more and more material online in the form of pdf and postscript files of preprints, talks, slides, and technical reports, the proportion of dangling nodes is growing. In fact, for some subsets of the web, dangling nodes make up 80% of the collection’s pages.”

http://www.cems.uvm.edu/~tlakoba/AppliedUGMath/for_talks/DeeperInsidePageRank.pdf

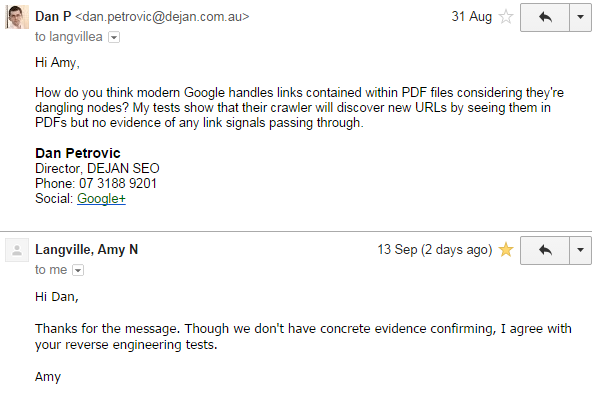

Note: I reached out to the co-author, Amy Langville, and asked whether she thought modern Google might be handling PDF links differently today compared with what was written more than a decade ago.

Amy agreed with my observations of Google using PDF links for URL discovery only, though she did state that we don’t have solid evidence confirming this.

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211