A ‘traditional’ link graph is a directed graph which consists of nodes connected by edges, a schematic representation of a document collection connected by directional links (A → B). Link-based search engines (such as Google) rely on directed link graphs to determine varying degrees of (query-agnostic) importance of pages in its index.

Undoubtedly, Google’s PageRank algorithm has evolved past the original random surfer model and gained additional sophistication but are they already taking advantage of the hypergraph model?

About HyperGraphs

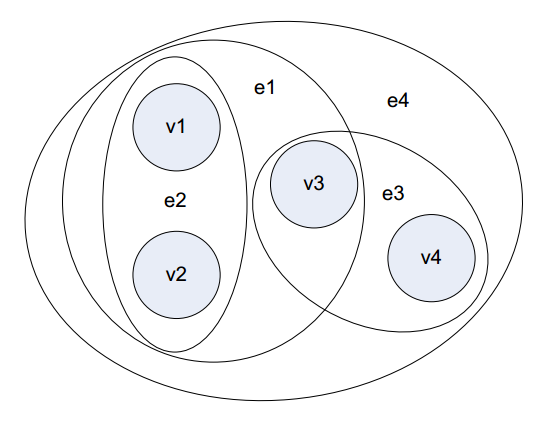

A hypergraph is a generalization of a graph ((https://en.wikipedia.org/wiki/Hypergraph)) in which a single hyperlink (hyperedge) can simultaneously link to two or more pages. In a recent paper ((Beyond Graphs: Toward Scalable Hypergraph Analysis Systems)) Heintz and Chandra argue that group interactions are better modeled by hypergraphs than traditional graphs. Below is an illustration of a hypergraph with four vertices (filled circles) and four hyperedges (enclosing ovals).

A hypergraph is derived by partitioning a set of pages from the original web graph by separating them into individual non-overlapping blocks. In other words it’s a way of segmenting the web into smaller sub-networks.

About HyperPagerank

During my research on practical applications of hypergraph in search engine algorithms I found an interesting paper published by a team of Brazilian researchers titled “A Hypergraph Model for Computing Page Reputation on Web Collections” ((A Hypergraph Model for Computing Page Reputation on Web Collections)).

The team identifies webspam as a major noise creator:

One of the main problems endured by link analysis strategies is to determine whether a link to a page can be considered as a vote for quality or not. Some links, such as navigational purpose links or spam links are noisy information in the web graph and can lead link analysis methods to wrong conclusions about the page reputation, when these links are considered as votes.

Their research was aimed at rendering the web as a hypergraph in order to reduce the impact of non-vote links when computing page reputation:

Links that do not represent votes for quality, such as Spam links and navigational links, are a problem to link analysis strategies since their importance has to be discounted when calculating page reputation.

HyperPagerank is then an adaptation of the original PageRank formula designed to work in a hypergraph environment and dependent on the block of pages the page is contained with. In translation, a pages’ PageRank is dependent on it’s link neighbourhood, not just on the PageRank of its inbound links and the reputation of a block of pages is the sum of all Pagerank of its pages. (for details see page 6 of the research paper)

Fighting Spam

The basic spam-fighting property of hypergraph-based link models is based on its partition criteria and the ability to control the influence of the individual page connections on a vote.

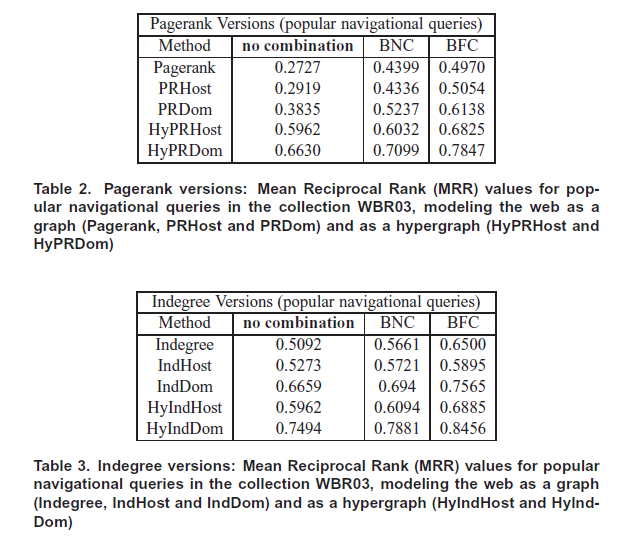

The method appears to be working really well with 27.8% improvement in search quality for popular navigational queries and 9.36% for randomly selected navigational queries.

Page-Level & High-Level Analysis

What’s interesting about the HyperPagerank method is that it combines both high-level and page-level reputation signals when computing page reputation. This is something search engine community has always assumed and experimented with, but without direct proof from Google (e.g. Domain Trust).

Brazilian researchers also state that they could partition the collection in such way that all the pages belonging to a link farm would be treated as a unique entity diminishing their influences.

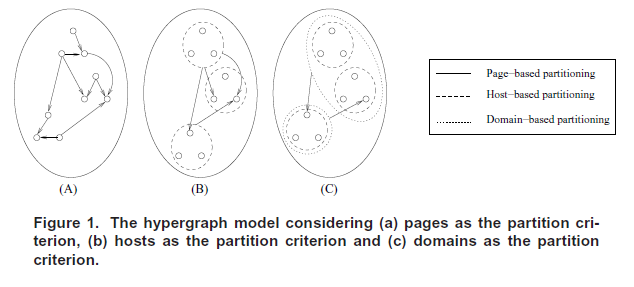

There are three partitioning criteria in the proposed hypergraph analysis method: page-based, domain-based and host-based:

Experimental Results

Experiments were created with real data using 12,020,513 pages, 141,284 domains, 999,522 hosts and 139,402,245 links. In total 32,414,004 hyperarcs connected on the host level and 1,906,879 on domain level.

The experimental results using Hypergraph/HyperPagerank were superior to the Graph/Pagerank method.

Future Direction

The research team which created HyperPagerank concept intends to examine content and relationship independence between pages instead of using hierarchy as a guide.

Bonus Material

Discussion with Linas Vepstas

Dan: How is it that modern search engines base their algorithms on graphs and not hypergraphs?

Linas: Why? Many reasons, I suppose. History, familiarity, tools, insight. Ordinary graphs are a standard part of school curriculum, are straightforward to understand in all their varieties (labelled, unlabelled, directed, undirected, etc.) Hypergraphs are very rarely taught; most people never heard of them. The standard terminology for a hypergraph is a stumbling block: how can an “edge” have more than two endpoints? it takes a while to get comfortable thinking in this way.

Standard graph theory rarely deals with hypergraphs; so ordinary graphs have adjacency matricies, and generalizations thereof, e.g. Markov chains. The analogous constructions for hypergraphs are considerably more complex — err — well, they could be, except that every hypergraph can be written as a graph, and so hypergraphs are not actually “more general” than graphs. In this sense, they are actually a subset of graphs, those having a certain tree-like shape (or bipartite, if they are just one level deep).

A lot of people use hypergraphs without really knowing or realizing that they are doing so. Its almost “too simple” … so, whenever someone is using a tree aka Directed Acyclic Graph (DAG), they are in fact using a hypergraph — a hypergraph is just a collection of DAG’s; nothing more, nothing less. So, for example, in natural language processing, DAG’s occur all over the place. DAG’s are also how compilers and virtual machines represent assembly instructions, subroutine calls, etc. DAG’s occur everywhere in logic: a boolean expression of AND’s and OR’s is just a tree, a DAG; a bunch of boolean expressions is just a hypergraph. All computer programs are trees … obviously so, when its scheme or lisp or haskell, perhaps less obviously so in python or perl.

So .. “natural applications”? All over computer science; they’re just absolutely everywhere. If you’ve got DAG’s or trees, you’re defacto dealing with hypergraphs, even if you don’t know it.

Knowing it does make certain design choices clearer and easier to talk about. I know of a certain natural language system where the author wrote it using trees with labelled edges. At the time, he did not understand hypergraphs, and said he regretted this, as certain linguistic structures are far more easily/naturally represented as hypergraphs than as the certain particular labelled tree design that he chose.

Dan: My puzzle is around adaptation of the random surfer model or PageRank algorithm with the dampening factor applying at each step, or each edge between the two nodes in a directed graph given that one edge connects more than one node? How do you calculate differences in reputation between all the documents in a collection?

Perhaps the main advantage is the speed of processing and not the algorithm effectiveness itself.

Since you mention natural language processing, it strikes me that Google could already be using hypergraphs in their voice search. I wrote a little bit about how I see their human-computer interaction evolve over time here:https://dejanmarketing.com/conversations-with-google/ but at the time didn’t realise they were just about to release “conversation search”:http://searchengineland.com/ok-google-hands-free-conversational-search-159627

Linas: I don’t know how to answer your reputation/page-rank question, mostly, I suppose, because the answer is “it depends”. That, and it probably requires deeper thought and some experimentation …

Handy Links:

http://blog.opencog.org/2013/03/24/why-hypergraphs/

http://opencog.org/about/

Dan Petrovic, the managing director of DEJAN, is Australia’s best-known name in the field of search engine optimisation. Dan is a web author, innovator and a highly regarded search industry event speaker.

ORCID iD: https://orcid.org/0000-0002-6886-3211

Interesting. It’s a commonly agreed upon conclusion among SEOs that repeat links from the same domain or same host have diminishing value. The number of links from unique RDs and unique hosts also correlates better with Google rankings than number of links from unique pages. Doesn’t that suggest that a concept similar to the hypergraph representation has been used by Google for years?

These data show that things are getting very good

It’s about time something like this is being done. The older form of PR is/has been used and abused..

A little touch to the future 🙂 Nice insights!

Hello Dan,

This is a very interesting post. It’s amazing they were able to combine the high level and page-level reputation signals for getting the results of page computation. Indeed, these Brazilian researchers are so brilliant. This HyperPagerank concept will eventually help further analysis that could lead to more productive SEO techniques. Thanks for sharing!